Current Projects

CPS: Medium: Dig, Sip, Breathe: Automated Monitoring of Carbon and Water Cycles in Agriculture

Automated and robotic technologies for sensing vegetation characteristics and other camera-observable indicators are well established, but sampling soil water content, soil organic carbon, and other in-ground indicators requires new advances in algorithms, robotics, and sensing technologies that interact with the environment. These indicators are also affected by other phenomena, such as transpiration in air columns and crop vegetation characteristics. This project will develop an effective cyber-physical monitoring strategy that combines heterogeneous indicators and tightly couples real-time sensor feedback to sampling decisions, so that the water and carbon continuum, from land and water surfaces to the atmosphere, is sampled more intelligently. This involves cyber-physical systems that dig, to measure soil organic carbon and soil water content; sip, to collect information about water runoff; and breathe, to measure atmospheric flux over long durations to characterize carbon and water cycles in agricultural fields. Together, these three processes will enable automated monitoring and verification of carbon and water cycles in agricultural settings.
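The idea of coupling real-time sensor feedback to sampling can be illustrated with a toy adaptive-sampling loop. This is a minimal sketch, not the project's strategy: it assumes a single 1-D transect, a hypothetical `measure(x)` callback that returns one reading (for example, soil water content) at position `x`, and a fixed sample budget. Each new reading decides where the next sample goes, by bisecting the interval whose endpoint readings disagree most.

```python
from typing import Callable, List, Tuple


def adaptive_transect(
    measure: Callable[[float], float],
    start: float,
    end: float,
    budget: int,
) -> List[Tuple[float, float]]:
    """Sample a 1-D transect, refining where consecutive readings differ most.

    `measure` is a hypothetical callback standing in for a real sensor
    (for example, a soil-moisture probe driven to position x).
    Returns (position, reading) pairs sorted by position.
    """
    samples = [(start, measure(start)), (end, measure(end))]
    while len(samples) < budget:
        samples.sort()
        # Feedback step: find the adjacent pair whose readings change the most.
        i = max(
            range(len(samples) - 1),
            key=lambda k: abs(samples[k + 1][1] - samples[k][1]),
        )
        # Take the next sample at the midpoint of that interval.
        mid = (samples[i][0] + samples[i + 1][0]) / 2.0
        samples.append((mid, measure(mid)))
    return sorted(samples)


# Usage with a synthetic step change at x = 6.3 (illustrative values only).
points = adaptive_transect(lambda x: 0.0 if x < 6.3 else 1.0, 0.0, 10.0, 6)
print([x for x, _ in points])  # [0.0, 5.0, 6.25, 6.875, 7.5, 10.0]
```

Bisection concentrates samples near sharp transitions; a real planner would also need to weigh travel and digging cost, sensor uncertainty, and the other indicators described above.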

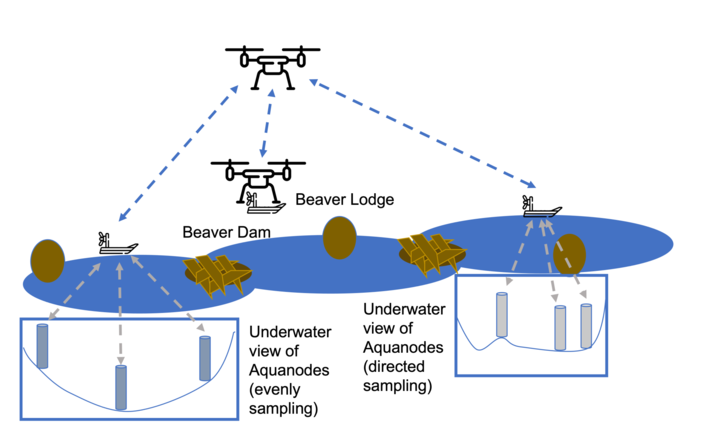

NRI: Collaborative Research: Adaptive multi-Robot Configurable Teams Investigating Changing Ecosystem

This project presents a vision for advancing heterogeneous multi-UxS (unmanned system) technologies, practices, and understanding to extend the reach of human sensing in difficult-to-access environments, while also providing fundamental scientific understanding of a new disturbance regime affecting all aspects of lowland Arctic ecosystems. The vision addresses key goals in the development of integrative robotic systems, such as improved UxS capability for sample collection, flexible UxS tasking based on vehicle abilities as determined from observed states, and human proficiency in operation and monitoring. These capabilities will be developed in accessible sub-Arctic environments before being refined in yearly tests in harsh, complex tundra settings, all while contributing to progress on fundamental robotic integration challenges. Teams of complementary flying, floating, and underwater robots developed by the project team have recently gained the ability to perform observations at precise locations and to take measurements both above and below the water surface. Further development will aid in obtaining water quality, temperature, and underwater mapping measurements, as well as wildlife and ecosystem observations, at remote sites in Alaska.

Deployable Swarms: Decentralized Multi-agent Path Planning and Control

Unmanned aerial systems (UAS) have been used for intelligence, surveillance, target acquisition, and reconnaissance (ISTAR) tasks. Although sensing technology continues to advance, UAS platforms are still subject to size, weight, and power constraints that limit the scale and duration of missions. One way to fulfill large-scale UAS missions is with a multi-agent system (swarm) capable of completing multiple mission objectives. In this project we develop a real-world, deployable swarm testbed for evaluating swarm coordination and control strategies and onboard perception. Large drones in the swarm carry onboard camera suites for testing and evaluating advanced perception algorithms. Custom toolchains include a command and control interface, a novel communication backend, and a consistent configuration strategy that enables rapid, repeatable deployment for a wide array of missions and advanced evaluation of custom algorithms.
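The project description does not specify its planner, so the following is only a generic illustration of what "decentralized" control means: each agent computes its own velocity from its goal and the positions of neighbors it can sense, with no central coordinator. It uses a simple artificial-potential-field rule (attraction to the goal, repulsion from nearby agents); the gains, sensing radius, and speed limit are hypothetical values, not parameters from the testbed.

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float]


def decentralized_velocity(
    pos: Vec,
    goal: Vec,
    neighbors: List[Vec],
    sense_radius: float = 5.0,  # hypothetical sensing range (m)
    k_goal: float = 1.0,        # hypothetical attraction gain
    k_rep: float = 4.0,         # hypothetical repulsion gain
    max_speed: float = 2.0,     # hypothetical speed limit (m/s)
) -> Vec:
    """Velocity command for one agent using only locally sensed information."""
    # Attraction: proportional pull toward this agent's own goal.
    vx = k_goal * (goal[0] - pos[0])
    vy = k_goal * (goal[1] - pos[1])
    for nx, ny in neighbors:
        dx, dy = pos[0] - nx, pos[1] - ny
        d = math.hypot(dx, dy)
        if 0.0 < d < sense_radius:
            # Repulsion along the unit vector away from the neighbor; its
            # magnitude k_rep * (1/d - 1/R) grows as d shrinks and is 0 at R.
            mag = k_rep * (1.0 / d - 1.0 / sense_radius) / d
            vx += mag * dx
            vy += mag * dy
    # Saturate to the vehicle's speed limit.
    speed = math.hypot(vx, vy)
    if speed > max_speed:
        vx, vy = vx * max_speed / speed, vy * max_speed / speed
    return (vx, vy)


# Each agent would call this every control cycle with its own sensed neighbors.
print(decentralized_velocity((0.0, 0.0), (10.0, 0.0), []))  # (2.0, 0.0)
print(decentralized_velocity((0.0, 0.0), (10.0, 0.0), [(0.0, 1.0)]))
```

Pure potential fields are easy to distribute but can trap agents in local minima, so deployed swarms typically combine a rule like this with higher-level path planning.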

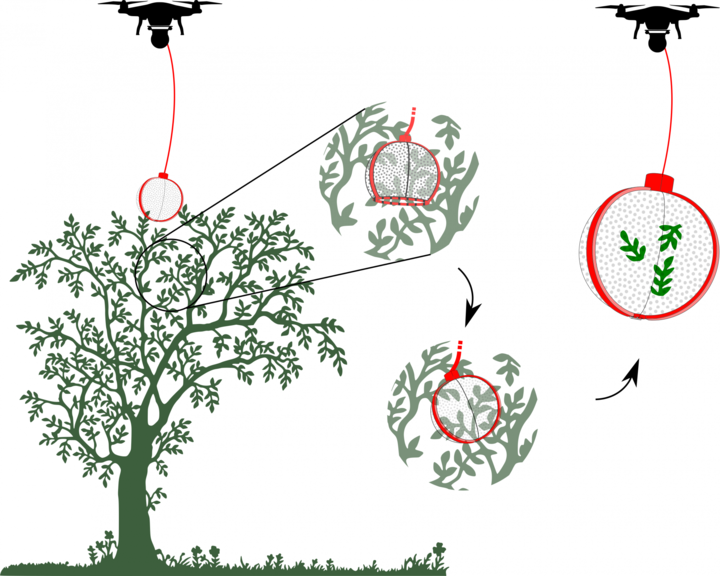

Leveraging Environmental Monitoring UAS in Rainforests

Rainforest canopies are important ecosystems for diverse plant and animal life; however, validating the model-based predictions behind scientific decisions about these environments is difficult because efficient data collection methods are lacking. Access is limited by remoteness, dense foliage, and venomous wildlife, which constrain research to maintained trails and vegetation near the forest floor. Because of these limitations, most data is currently collected within 50 meters of trails and 5 meters of the ground surface, making spatially explicit measurements sparse at best. Unmanned Aerial Systems (UASs) have been used for sensor deployment and monitoring, but the project team only recently developed the ability to collect soil samples at precise locations. Further development of the system will aid in obtaining soil, water, and leaf samples from coordinated sites to ensure coverage, enabling spatially distributed data collection in areas where it is currently too costly or dangerous.

Past Projects

UAS Digging and In-Ground Sensor Emplacement

Using unmanned aircraft systems (UASs) as sensor platforms is a well-studied and widely implemented area, and UASs have even been used to place sensors on the ground or in water. However, in some cases placing a sensor on top of the ground is not sufficient: applications such as accurate seismic measurements or soil moisture measurements require the sensor to be placed into the ground. We have developed a system that drills a sensor into the ground using an auger mechanism and the weight of the UAS.

UAS Prescribed Fire Ignition

Prescribed fires are increasingly used to combat wildfires and to improve ecosystem health by controlling invasive species. Yet the tools available for fire ignition (e.g., hand tools, chainsaws, drip torches, and flare launchers) are antiquated and place ground crews at great risk. Helicopter-based ignition, itself a high-risk activity, has helped with scale, but its expense makes it inaccessible to most users. We have developed an Unmanned Aerial System for Firefighting (UAS-FF) to enhance fire ignition capabilities while significantly reducing the risk to firefighters. The UAS-FF precisely drops delayed ignition spheres and can be used to ignite fire lines in areas that are otherwise too difficult or dangerous to access with traditional methods. We have worked with the FAA and fire agencies and are currently performing the first set of field experiments in which the UAS-FF ignites fires as part of larger prescribed burns. This project led to the commercialization of this technology by Drone Amplified.

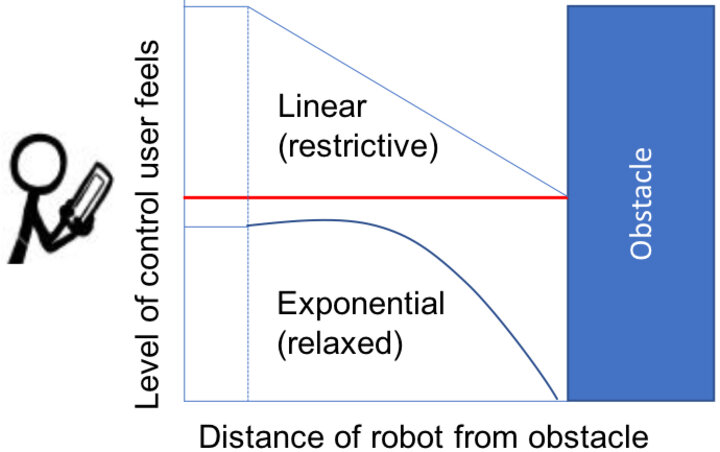

Cyber-Physical & Human Unmanned Aircraft Systems

Most cyber-physical human systems (CPHS) rely on users learning how to interact with the system. In contrast, a collaborative CPHS should learn from the user and adapt in a way that improves holistic system performance. Accomplishing this requires collaboration between the human-robot/human-computer interaction and cyber-physical systems communities, so that knowledge about users feeds back into the design of the CPHS. In this project we seek to infer intrinsic user qualities from human-robot interactions and correlate them with robot performance, in order to adapt the autonomy and improve holistic CPHS performance. Through a user study we demonstrate that this idea is feasible, use it to predict loss-of-control events in a CPHS, and provide an extensive corpus to the community for use in CPHS research.

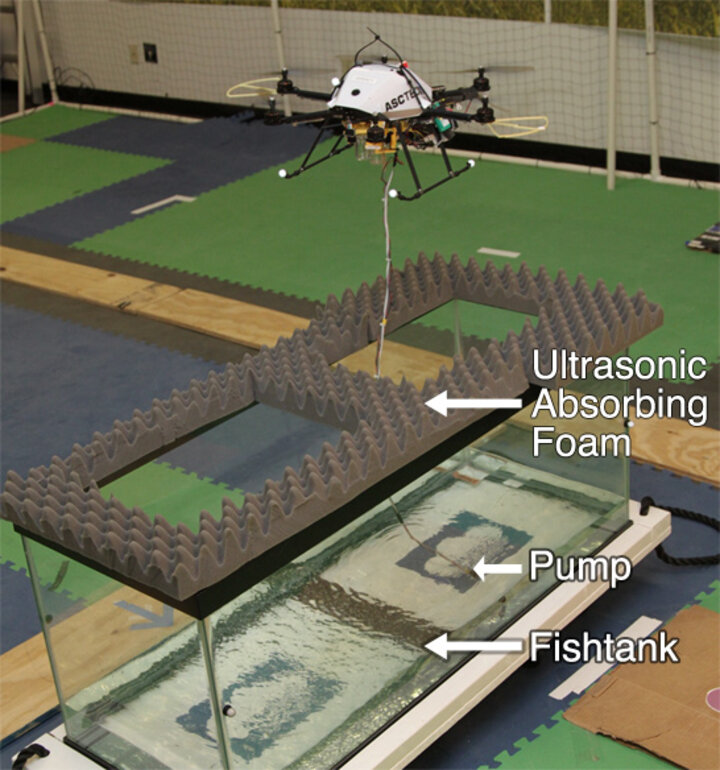

Co-Aerial-Ecologist: Robotic Water Sampling and Sensing in the Wild

The goal of this research is to develop an aerial water sampling system that can be quickly and safely deployed to reach varied and hard-to-access locations, that integrates with existing static sensors, and that is adaptable to a wide range of scientific goals. The ability to obtain samples quickly over large areas will dramatically increase understanding of critical natural resources. This research will also enable better interactions between non-expert operators and robots by using semi-autonomous systems that detect faults and unknown situations to ensure the safety of the operators and the environment.

This research is partially funded as part of the National Robotics Initiative through the USDA National Institute of Food and Agriculture (grant #2013-67021-20947).

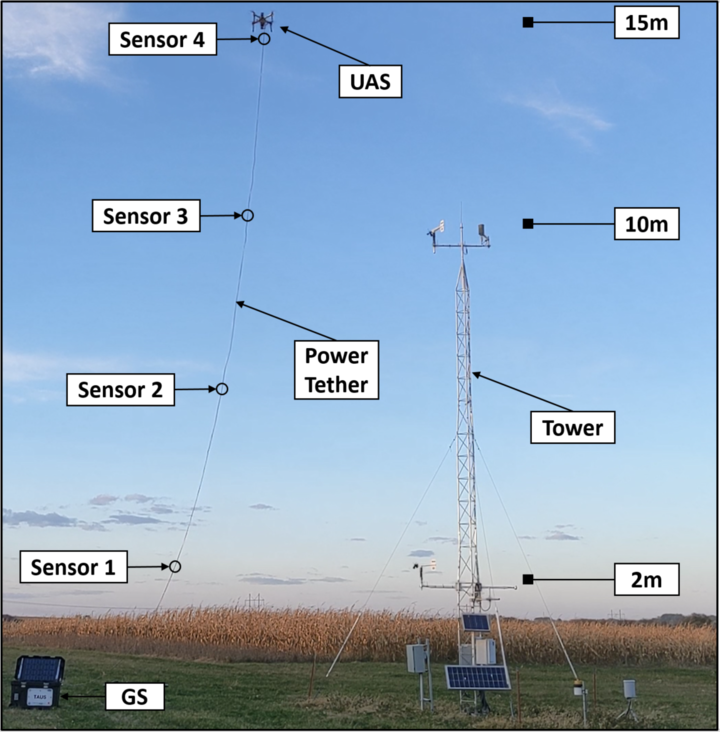

Adaptive and Autonomous Energy Management on a Sensor Network Using Aerial Robots

This research introduces novel recharging systems and algorithms that supplement existing systems and lead to autonomous, sustainable energy management on sensor networks. The purpose of this project is to develop a wireless power transfer system that enables unmanned aerial vehicles (UAVs) to provide power to, and recharge the batteries of, wireless sensors and other electronics far removed from the electric grid. We do this using wireless power transfer based on strongly coupled resonances. We have designed and built a custom power transmitting and receiving system that is optimized for use on UAVs. We are investigating systems and control algorithms to optimize power transfer from the UAV to the remote sensor node. In addition, we are investigating energy usage algorithms to optimize how power is used in networks of sensors that can be recharged wirelessly from UAVs.

Applications include powering sensors in remote locations without access to grid or solar energy, such as: underwater sensors that surface intermittently to send data and recharge, underground sensors, sensors placed under bridges for structural monitoring, sensors that are only activated when the UAV is present, and sensors in locations where security or aesthetic concerns prevent mounting solar panels. See the project webpage for more details. This work is partially supported by the National Science Foundation.
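Two standard textbook relations help explain why resonance and coupling matter for a UAV-to-sensor power link. Transmitter and receiver coils are tuned to the same resonant frequency f0 = 1/(2π√(LC)), and for two coupled resonators with an optimally matched load the best achievable link efficiency depends only on the figure of merit U = k√(Q1·Q2), where k is the coupling coefficient and Q1, Q2 are the coil quality factors: η_max = U²/(1 + √(1 + U²))². The sketch below evaluates both; all component values are hypothetical and are not taken from the project's hardware.

```python
import math


def resonant_frequency_hz(inductance_h: float, capacitance_f: float) -> float:
    """Resonant frequency of an LC tank: f0 = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))


def max_link_efficiency(k: float, q1: float, q2: float) -> float:
    """Best-case efficiency of two coupled resonators with an optimal load.

    Uses the figure of merit U = k * sqrt(q1 * q2):
        eta_max = U**2 / (1 + sqrt(1 + U**2))**2
    """
    u_squared = k * k * q1 * q2
    return u_squared / (1.0 + math.sqrt(1.0 + u_squared)) ** 2


# Hypothetical coil values, for illustration only.
print(resonant_frequency_hz(1e-6, 1e-9))  # about 5.03e6 Hz
for k in (0.01, 0.05, 0.1):
    print(k, round(max_link_efficiency(k, 100.0, 100.0), 3))
```

The loop shows the qualitative point: efficiency rises steeply with k·√(Q1·Q2), so high-Q resonant coils can still transfer useful power when k is small, and anything that increases k, such as better alignment or a smaller gap between the UAV and the receiver, improves the link.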

Crop Surveying Using Aerial Robots

Surveying crop heights during a growing season provides important feedback on crop health and on the crop's response to environmental stimuli, such as rain or fertilizer treatments. Gathering this data at high spatio-temporal resolution poses significant challenges both to researchers conducting phenotyping trials and to commercial agricultural producers. Currently, crop height information is gathered by manual measurement with a tape measure, or by mechanical methods such as a tractor driving through the field with an ultrasonic or mechanical height estimation tool. These measurement processes are time-consuming and frequently damage the crops and field. As a result, even though crop height information can be extremely valuable throughout the growing season, it is typically collected only at the end of the growing season.
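The paragraph motivates aerial surveying but does not describe the estimation method itself. One simple approach, offered only as a sketch under stated assumptions: a UAV flies at roughly constant altitude with a downward-facing rangefinder, so some readings hit the top of the canopy and others hit the soil between plants. Crop height is then the difference between a high percentile of the ranges (ground) and a low percentile (canopy); percentiles are used instead of min/max to reject spurious returns. The percentile choices are hypothetical tuning parameters.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile of `values`, with p in (0, 100]."""
    if not values:
        raise ValueError("no range readings")
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100.0 * len(ordered)))
    return ordered[rank - 1]


def estimate_crop_height(
    ranges_m: Sequence[float],
    canopy_pct: float = 10.0,  # hypothetical: nearest returns = canopy top
    ground_pct: float = 90.0,  # hypothetical: farthest returns = soil
) -> float:
    """Crop height (m) from downward rangefinder readings over one plot.

    Assumes the UAV altitude is roughly constant over the readings, so the
    ground-to-canopy gap dominates the spread of the measured ranges.
    """
    ground = percentile(ranges_m, ground_pct)
    canopy = percentile(ranges_m, canopy_pct)
    return ground - canopy


# Illustrative readings: five canopy hits near 1.0 m, five soil hits near 2.5 m.
readings = [1.0, 2.5, 1.1, 2.5, 1.0, 2.45, 1.05, 2.5, 1.1, 2.5]
print(estimate_crop_height(readings))  # 1.5
```

In practice altitude drift and canopy gaps would need to be handled, for example by fusing altitude estimates and computing the estimate per plot rather than over a whole flight.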

ROS Glass Tools

Robot systems frequently have a human operator in the loop who directs them at a high level and monitors for unexpected conditions. In this project we aim to provide an open-source toolset that interfaces the Robot Operating System (ROS) with Google Glass. The Glass acts as a heads-up display, so an operator monitoring vehicle state can simultaneously watch the vehicle's actions in the real world. In addition to monitoring, the project aims to harness the voice recognition of the Glass to allow voice control of robots. The project also aims to be easily extensible, so it can be used to monitor and control a multitude of robot systems using the Glass. More information on the tools can be found at http://wiki.ros.org/ros_glass_tools.
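To make the extensibility goal concrete, here is a minimal, ROS-independent sketch of a voice-command router: recognized phrases are normalized and looked up in a registry of handlers, so supporting a new robot means registering new phrases rather than changing the recognizer. The class and method names are hypothetical and are not the API of ros_glass_tools; in a real ROS node each handler would publish a message or call a service.

```python
from typing import Callable, Dict, List, Optional


class VoiceCommandRouter:
    """Hypothetical registry mapping spoken phrases to robot actions."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[], str]] = {}

    @staticmethod
    def _normalize(text: str) -> str:
        # Case- and whitespace-insensitive matching of recognizer output.
        return " ".join(text.lower().split())

    def register(self, phrase: str, handler: Callable[[], str]) -> None:
        self._handlers[self._normalize(phrase)] = handler

    def phrases(self) -> List[str]:
        # Could be shown on the head-mounted display as the valid commands.
        return sorted(self._handlers)

    def dispatch(self, recognized_text: str) -> Optional[str]:
        """Run the matching handler; return None for unrecognized speech."""
        handler = self._handlers.get(self._normalize(recognized_text))
        return handler() if handler is not None else None


router = VoiceCommandRouter()
# Stand-in handlers; a ROS node would publish to a topic here instead.
router.register("take off", lambda: "takeoff requested")
router.register("land", lambda: "landing requested")
print(router.dispatch("  Take   OFF "))  # takeoff requested
print(router.dispatch("do a flip"))      # None
```

Returning None for unrecognized speech, rather than guessing the closest command, is a conservative choice for an operator interface, since a misheard command can move a real vehicle.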